Becoming a data-driven organization means using available data to make informed business decisions, but there’s an important prerequisite: the available data needs to be correct. To achieve a high level of accuracy and consistency, organizations often turn to a set of core data entities known as “master data.”

Master data is the foundation for an organization’s data-driven activities, providing a consistent view of critical data such as customer information, product details, supplier data, and employee records. The practice of improving your organization’s data quality by ensuring that identifiers and key data dimensions about entities are accurate and consistent is called master data management (MDM).

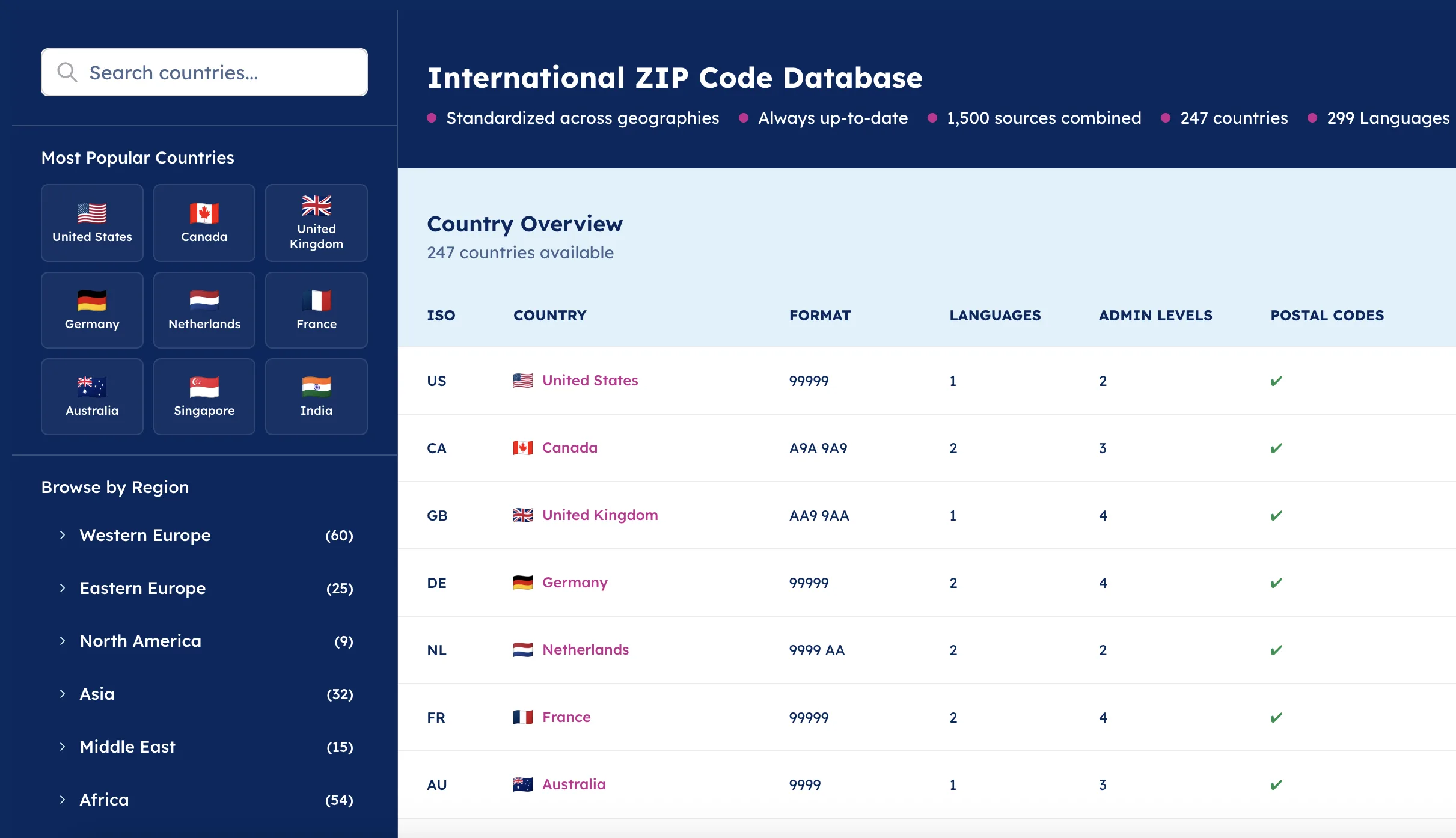

While it seems obvious that data quality is important, defining your master data management strategy is not an endeavor to be taken lightly. If your organization currently uses a more ad-hoc approach to data management (e.g., every department handles data differently or data sanitization practices are unclear), some of your data almost certainly doesn’t match expectations and is likely leading your teams astray.

In this article, you’ll learn what an MDM strategy is, why you need it, and the components of a strong MDM strategy. You’ll also learn about the tools and organizational structures required to implement an MDM strategy, regardless of company size or industry.

Why You Need an MDM Strategy

Data is likely being produced in all corners of your organization, so many stakeholders are involved. Before companies adopt an MDM strategy, they typically have an “ad-hoc” data management approach, meaning that each department or team handles data in its own way.

An ad-hoc approach often leads to failure because individual initiatives operate in isolation. Instead of working towards common goals, departments or teams end up working in silos, duplicating efforts, and wasting resources.

Ad-hoc data management also tends to focus on technology rather than on business effort or results. Technology solutions may be implemented without a clear understanding of the business requirements, resulting in costly investments that do not deliver the intended value.

What is an MDM Strategy?

A master data management strategy defines how an organization creates and maintains a centralized, consistent, and accurate view of the data used across its business units. Teams leading MDM efforts take on significant responsibility, and their success can substantially impact an organization.

An MDM strategy typically considers the following areas:

Data Governance

Governance is designed to ensure data quality, consistency, and accuracy. It involves defining data standards, identifying data owners, and establishing data use and access guidelines. Questions that should be answered include:

- What are the critical data elements (CDEs) that need to be managed across the organization, and who owns them?

- What are the data retention policies, and how are they enforced to ensure compliance with regulations?

- What are the procedures for managing data changes and updates, and how are they documented and tracked?

- How is data governance enforced and audited to ensure compliance and adherence to data policies and standards?

Data Modeling

Modeling involves defining an organization-wide data model that clearly describes the relationships between different entities. It ensures that data is organized consistently and logically across the organization and answers questions like:

- How will data entities be identified and defined (including their key attributes) in the organization’s data model?

- What are the relationships between different data entities, and how will these relationships be represented?

- What are the rules for creating, updating, and deleting data entities, and how will these rules be enforced?
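To make these questions concrete, here is a minimal sketch of what a small data model with enforced rules might look like. The `Customer` and `Order` entities, their attributes, and the two rules are assumptions chosen for illustration, not a prescribed model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Customer:
    customer_id: str   # key attribute: unique identifier of the entity
    name: str
    country_code: str


@dataclass(frozen=True)
class Order:
    order_id: str
    customer_id: str   # relationship: each order belongs to one customer
    total: float


class MasterDataModel:
    """Holds the entities and enforces simple create and delete rules."""

    def __init__(self):
        self.customers = {}
        self.orders = {}

    def add_customer(self, customer):
        # Rule (assumed): identifiers must be unique.
        if customer.customer_id in self.customers:
            raise ValueError(f"duplicate customer_id: {customer.customer_id}")
        self.customers[customer.customer_id] = customer

    def add_order(self, order):
        # Rule (assumed): an order may only reference an existing customer.
        if order.customer_id not in self.customers:
            raise ValueError(f"unknown customer_id: {order.customer_id}")
        self.orders[order.order_id] = order

    def delete_customer(self, customer_id):
        # Rule (assumed): a customer that still has orders cannot be deleted.
        if any(o.customer_id == customer_id for o in self.orders.values()):
            raise ValueError("customer still has orders")
        del self.customers[customer_id]
```

In practice, rules like these are often enforced by database constraints or by the MDM tool itself. The point is only that each rule in the model should be written down and checked somewhere.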

Data Quality

Overseeing data quality requires defining data quality standards, identifying existing and potential data quality issues, and implementing processes to improve data quality over time. It will answer questions like:

- How will data quality be measured and monitored?

- What are the consequences and costs of poor data quality?

- How will data quality metrics be communicated across the organization?

- What processes will be put in place to ensure ongoing data quality?

Data Integration

As your number of data sources grows, integrating data from across your organization becomes an increasingly critical job. As you work on this part of your MDM strategy, ask:

- What are the different data sources within the organization?

- How might these data sources vary or be misaligned?

- What data integration technologies and tools can be used to automate this process?
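As a small illustration of how sources can be misaligned, the sketch below maps two hypothetical systems (`crm` and `billing`) onto one canonical schema. The field names, the mappings, and the normalization rules are all assumptions. Real postcode normalization, for example, is country-specific and more involved than removing spaces.

```python
# Hypothetical per-source field mappings onto a canonical schema.
FIELD_MAPS = {
    "crm": {"id": "customer_id", "zip": "postcode", "country": "country_code"},
    "billing": {
        "cust_no": "customer_id",
        "postal_code": "postcode",
        "ctry": "country_code",
    },
}


def to_canonical(source, record):
    """Rename source-specific fields and normalize value formatting."""
    mapping = FIELD_MAPS[source]
    canonical = {mapping[k]: v for k, v in record.items() if k in mapping}
    # Normalize formatting so equal values compare equal across sources.
    canonical["customer_id"] = str(canonical["customer_id"]).strip()
    canonical["postcode"] = str(canonical["postcode"]).replace(" ", "").upper()
    canonical["country_code"] = str(canonical["country_code"]).strip().upper()
    return canonical


crm_row = {"id": "42", "zip": "sw1a 1aa", "country": "gb"}
billing_row = {"cust_no": " 42", "postal_code": "SW1A1AA", "ctry": "GB "}

# Both rows describe the same customer once they share a schema and format.
same_customer = to_canonical("crm", crm_row) == to_canonical("billing", billing_row)
```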

Data Security

Finally, your MDM strategy should define the security measures to protect data from unauthorized access, loss, or corruption. This will answer questions like:

- Who has access to the master data, and how is access granted and managed?

- How is data encrypted, and what key management processes are in place to secure data?

- How is data backed up, and how is it restored after data loss or a security breach?

- How are data privacy and confidentiality maintained, and do the requirements vary by region as legal obligations vary?

I’ve already hinted at some of the benefits of having an MDM strategy, but we’ll look at them more deeply in the next section.

Benefits of Having an MDM Strategy

In an ad-hoc approach, business requirements are often defined a posteriori, as a result of progressive insight.

Since an MDM strategy pinpoints the current state of data management and focuses on the business and functional requirements, the target state is clear from the start. As a result, fewer resources are wasted because the priorities and the accompanying efforts are all part of an established roadmap to reach the target state.

With all business and functional requirements outlined, defining an adequate MDM technology stack is much easier, whether it results in a build or buy approach. Requests for proposals will be closer to the mark, a proof of concept will generate business value faster, and the evaluation of various solutions should become a much simpler exercise.

Finally, with the current state, the target state, the technology stack, and the roadmap defined, the definition of success and the metrics used to measure it can easily be formulated. Some typical MDM-related metrics are:

- The number of identified inaccuracies in the data

- The percent of data products containing measures and metrics to evaluate their adoption and accuracy

- The speed and efficiency of data reconciliation processes

- The number of duplicate records in the system

- The frequency and number of data requests from business units

- The number of data quality issues reported and resolved

- The number of successful data merges
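Two of these metrics, the number of duplicate records and the share of reported issues that were resolved, could be computed along the following lines. This is a sketch under assumptions: the sample records, the issue log format, and the choice to match duplicates on a normalized email address are all hypothetical.

```python
from collections import Counter

records = [
    {"customer_id": "C1", "email": "a@example.com"},
    {"customer_id": "C2", "email": "b@example.com"},
    {"customer_id": "C3", "email": "A@Example.com "},  # same email as C1
]

issues = [
    {"id": 1, "status": "resolved"},
    {"id": 2, "status": "open"},
    {"id": 3, "status": "resolved"},
    {"id": 4, "status": "resolved"},
]


def duplicate_count(rows, key):
    """Count rows beyond the first occurrence of each normalized key value."""
    counts = Counter(str(r[key]).strip().lower() for r in rows)
    return sum(n - 1 for n in counts.values() if n > 1)


def resolution_rate(issue_log):
    """Share of reported data quality issues that have been resolved."""
    if not issue_log:
        return 0.0
    resolved = sum(1 for i in issue_log if i["status"] == "resolved")
    return resolved / len(issue_log)


print(duplicate_count(records, "email"))  # 1
print(resolution_rate(issues))            # 0.75
```

Tracking numbers like these over time, rather than as a one-off, is what makes them useful as success metrics.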

Beyond these metrics, implementing an MDM strategy helps your entire organization see the benefits of working together to carry the strategy out.

Drafting an MDM Strategy

Rather than reinventing the wheel, organizations should use established best practices and frameworks to define their master data management strategy. The COBIT governance and management objectives provide a great starting point for building an MDM strategy, so that framework heavily influences this section.

Processes

In a previous section, I described how MDM involves many stakeholders because of the omnipresent character of data. This is why the number of processes that need to be established is very high. While these processes will vary based on the nature of your data and business, there are four I’d like to highlight:

1. Systematic Data Quality Approach

The most popular descriptions of data quality involve the following six dimensions:

- accuracy

- completeness

- consistency

- timeliness

- validity

- uniqueness

Since MDM is about establishing a gold standard for your organization’s data, an MDM strategy should contain the processes and techniques used to perform data quality tests.
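Three of the six dimensions listed above (completeness, validity, and uniqueness) lend themselves to simple automated checks. The sketch below scores a few sample rows. The data, the four-digit postcode rule, and the way each score is defined are assumptions for illustration, and organizations often define these measures differently.

```python
import re

rows = [
    {"id": "1", "postcode": "1000", "city": "Brussels"},
    {"id": "2", "postcode": "", "city": "Ghent"},         # incomplete
    {"id": "3", "postcode": "90A0", "city": "Ghent"},     # invalid format
    {"id": "3", "postcode": "2000", "city": "Antwerp"},   # duplicate id
]

POSTCODE_PATTERN = re.compile(r"\d{4}")  # assumed rule: exactly four digits


def completeness(rows, field):
    """Share of rows in which the field is filled in."""
    filled = sum(1 for r in rows if r.get(field) not in (None, ""))
    return filled / len(rows)


def validity(rows, field, pattern):
    """Share of non-empty values that fully match the expected pattern."""
    values = [r[field] for r in rows if r.get(field) not in (None, "")]
    if not values:
        return 1.0
    return sum(1 for v in values if pattern.fullmatch(v)) / len(values)


def uniqueness(rows, field):
    """Share of distinct values among all values of the field."""
    values = [r[field] for r in rows]
    return len(set(values)) / len(values)


report = {
    "postcode completeness": completeness(rows, "postcode"),
    "postcode validity": validity(rows, "postcode", POSTCODE_PATTERN),
    "id uniqueness": uniqueness(rows, "id"),
}
```

Checks like these can run at any stage of the pipeline, and failing scores can feed the issue reporting process.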

A systematic approach to data quality implies that data is tested regularly. Typically, data testing can occur in three different phases:

- Tests on the serving layer

- Tests throughout the data processing pipelines

- Tests embedded in the data modeling

However, not all data quality issues are detected a priori. For the unknown unknowns, there should be a systematic collection and processing of data quality issues reported by stakeholders.

Finally, you must consider the source of all your data and its impact on quality. For example, if your business relies on location data, it’s important to keep it up-to-date as boundaries, addresses, and postcodes change quite often. Reliable data sources (GeoPostcodes, for instance) can help, but your organization is ultimately responsible for all imported data, regardless of the source.

2. Systematic Data Cleansing Approach

Data cleansing is known by many names and done in many ways, but it is highly contingent on the chosen data integration paradigm: ETL or ELT.

The former cleanses data before loading it into the data warehouse. The latter loads everything into the data warehouse, and data cleansing becomes part of data modeling. Either way, defining the data cleansing approach and standards makes it possible to hold data providers accountable for their data products through SLAs.
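The difference between the two paradigms is mostly about where the cleansing step runs. The following sketch uses in-memory lists in place of a real warehouse. The `cleanse` rule and the `raw` and `model` layer names are hypothetical.

```python
def cleanse(row):
    """Hypothetical cleansing rule: trim whitespace, upper-case the country."""
    return {
        "name": row["name"].strip(),
        "country": row["country"].strip().upper(),
    }


def run_etl(source_rows):
    """ETL: transform first, so the warehouse only ever holds cleansed rows."""
    return [cleanse(r) for r in source_rows]


def run_elt(source_rows):
    """ELT: load raw rows unchanged, then derive a cleansed model from them."""
    warehouse = {"raw": [dict(r) for r in source_rows]}
    warehouse["model"] = [cleanse(r) for r in warehouse["raw"]]
    return warehouse


source = [{"name": " Acme ", "country": "be "}]
etl_warehouse = run_etl(source)
elt_warehouse = run_elt(source)
```

Both runs end with the same cleansed rows, but only the ELT warehouse still holds the raw input. That makes it possible to rerun the cleansing logic later without going back to the source system.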

3. Systematic Data Product Lifecycle Approach

The demand and supply of data assets are never in equilibrium. Generally, an enterprise holds too many data points to maintain forever, especially if its datasets grow exponentially over time.

That’s why there should be a systematic approach to deciding which data is onboarded, cleansed, and served. Furthermore, the approach should define how and when data is documented and how changes are implemented.

4. Communication

Establishing processes that describe how and which data should be onboarded or cleansed is one thing, but making these processes clear to all stakeholders is another challenge. An important part of any MDM process is establishing how data issues are communicated to ensure that MDM efforts gain traction across the organization.

Organizational Structures

Besides the processes, there should be organizational structures that are accountable and responsible for their implementation.

A popular technique is the RACI matrix, which maps responsibilities to organizational roles. For most processes, a data management function (such as a Director of Data Management) should ultimately be accountable.

When implementing processes and managing policies, responsibilities could be set up horizontally or vertically.

A vertical approach implies that the data product lifecycle scopes responsibilities. Data cleansing policies are the responsibility of the data engineering manager, data quality policies are part of the business intelligence manager’s job, and the data lifecycle policies are assigned to the portfolio manager.

A horizontal approach implies that the data entities scope responsibilities. If each entity has a product owner (customer, product, partner, etc.), each product owner will be responsible for all policies, but only within their entity.

A horizontal approach can be more scalable, but it may also lead to divergence in implementation as each entity’s owner may interpret the strategy differently.
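A RACI matrix like the one mentioned above can be kept as plain data and checked automatically. The sketch below follows the vertical example. The role assignments are hypothetical, and the check encodes the common convention that each process has exactly one accountable role and at least one responsible role.

```python
# R = responsible, A = accountable, C = consulted, I = informed
raci = {
    "data cleansing policy": {
        "Director of Data Management": "A",
        "Data Engineering Manager": "R",
        "Business Intelligence Manager": "C",
    },
    "data quality policy": {
        "Director of Data Management": "A",
        "Business Intelligence Manager": "R",
        "Portfolio Manager": "I",
    },
    # Deliberately incomplete: nobody is accountable for this process.
    "data lifecycle policy": {
        "Portfolio Manager": "R",
        "Data Engineering Manager": "C",
    },
}


def validate_raci(matrix):
    """Return the processes that lack exactly one 'A' or at least one 'R'."""
    problems = []
    for process, roles in matrix.items():
        letters = list(roles.values())
        if letters.count("A") != 1 or letters.count("R") < 1:
            problems.append(process)
    return problems


print(validate_raci(raci))  # ['data lifecycle policy']
```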

People, Skills, and Capabilities

Your MDM strategy should also outline the people, skills, or capabilities required for implementing and maintaining the master data. Essential skillsets include:

- Data and/or analytics engineering: extracting, loading, and transforming the data to turn it into a data product that can easily be consumed;

- Data analytics: supporting business stakeholders with their business questions regarding the data and how it should be interpreted or used;

- Data management: setting the principles and guidelines for the data, defining the data model, specifying the serving layer, etc.

Infrastructure

One of the most common pitfalls in an MDM effort is handling it exclusively from a technological lens. Tools should not dictate the strategy; strategies should dictate the tools.

Nevertheless, infrastructural decisions need to be made, and there are four architectural MDM paradigms to consider:

1. Registry

A registry approach is the most lightweight way to handle master data management. Instead of processing and cleaning the data, it leaves the data where it sits. The registry is read-only: it indexes existing data assets by matching data from various sources via unique keys. The benefits of this approach are that no data is duplicated, there are no regulatory implications, and no data is overwritten in source systems.
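A minimal sketch of the registry idea is shown below. The two source systems, their records, and the use of an email address as the matching key are assumptions. The registry stores only pointers, and a combined view is assembled at read time without writing to the sources.

```python
# Hypothetical source systems, keyed by their own local identifiers.
sources = {
    "crm": {"crm-17": {"email": "ann@example.com", "name": "Ann"}},
    "erp": {"erp-903": {"email": "ann@example.com", "vat": "BE0123"}},
}


def build_registry(sources, match_key):
    """Map each match-key value to the (system, local id) pairs holding it."""
    registry = {}
    for system, table in sources.items():
        for local_id, row in table.items():
            registry.setdefault(row[match_key], []).append((system, local_id))
    return registry


def lookup(registry, sources, key_value):
    """Assemble a read-only combined view; the sources are never modified."""
    view = {}
    for system, local_id in registry.get(key_value, []):
        view.update(sources[system][local_id])
    return view


registry = build_registry(sources, "email")
ann = lookup(registry, sources, "ann@example.com")
```

Matching on a single exact key is the simplest case. Real registries often need fuzzy matching when sources share no reliable identifier.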

2. Consolidation

The big data revolution has enabled near-free storage and low-cost processing in the cloud, so consolidating data has started to top many enterprise priority lists. The benefits are that integrating data is unidirectional and fairly easy to manage. Many DataOps best practices are built around this data pipeline approach, in which data at rest (but nowadays often also in-flight) is cleaned and processed in a single stack to be consumed for analytical purposes.

3. Coexistence

A coexistence approach adds complexity to the consolidation approach. Instead of only synchronizing data from production systems to the central hub (often a data warehouse), the synchronization also happens in the other direction. This approach has two main benefits: every system has a single version of the truth, and the processed data can be used for operational purposes.
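The two-way synchronization can be sketched as a pull step followed by a push step. Everything here is an assumption made for illustration: the system names, the integer `updated` counter, and the "most recently updated record wins" survivorship rule, which is only one of several rules a hub might apply.

```python
def sync(systems):
    """Pull records into a hub, keep the newest per key, then push back."""
    hub = {}
    # Pull: the most recently updated version of each record survives.
    for records in systems.values():
        for key, rec in records.items():
            if key not in hub or rec["updated"] > hub[key]["updated"]:
                hub[key] = dict(rec)
    # Push: every system that holds the key receives the surviving version.
    for records in systems.values():
        for key in records:
            records[key] = dict(hub[key])
    return hub


systems = {
    "crm": {"C1": {"phone": "111", "updated": 1}},
    "billing": {"C1": {"phone": "222", "updated": 2}},
}

hub = sync(systems)
```

After the call, both systems hold the same version of record `C1`, which is what makes the processed data usable for operational purposes.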

4. Transaction

Instead of duplicating data to a centralized hub, as in the consolidation or coexistence approach, the transaction approach sees the centralized hub as a data cleaning and enhancement tool. Source systems can subscribe to the hub to enhance or correct their data. The benefit is that you get to update/correct the data in the source systems via a transparent set of rules while also using it for operational purposes without compromising legal obligations due to data duplication.

Policies and Procedures

An MDM strategy should outline the policies that govern how master data is handled. Some policies that may be defined include:

- Data cleansing policy: Prescribes frequency, guidelines, and accountability; documents available means (methods, solutions, and tools) through which data cleansing challenges are tackled

- Data management policy: Prescribes how a data product moves through its lifecycle, from ideation to creation, production, deployment, and archival

- Data quality assessment policy: Prescribes how data quality tests are implemented and what they test for

- Privacy policy: Prescribes how personal data is handled and how regulatory obligations and procedures are followed

Culture

A final aspect of a data management strategy is to outline the desired organizational culture to embed all previous components. Two cultural properties are relevant to MDM:

- Shared responsibility of data assets: although the accountability and responsibilities are clearly described within an MDM strategy, everyone is responsible for spotting and reporting data quality issues.

- Awareness around integrity, quality, accuracy & completeness: various efforts should be set up to ensure that all stakeholders know what to expect from the master data.

Conclusion

Hopefully, you can see that an ad-hoc approach to master data management has flaws and that a well-defined strategy is your best bet for achieving success with MDM. Data integrity and accuracy are paramount to maintaining business agility and profitability in the long run, so carefully considering your MDM strategy is essential.

Because data is omnipresent within modern organizations, your MDM strategy will influence many processes, actors, and organizational structures. Creating this strategy doesn’t happen overnight, but this article has given you a high-level blueprint for getting started.

To learn more about MDM tools, check out our article comparing the most popular ones.

Note: this article applies the APO02 and APO14 management objectives of the Control Objectives for Information and Related Technology (COBIT) IT governance control framework to MDM strategy.

What are master data management strategies?

There are three primary MDM strategies.

First, Registry-based MDM involves creating a central registry or index of master data. It acts as a reference point to link and access data from various sources without physically consolidating it.

Second, Hub-based MDM entails establishing a centralized master data repository, often called a data hub, where data from diverse sources is integrated and harmonized.

Lastly, Coexistence-based MDM recognizes that different departments may have distinct master data needs, allowing them to maintain their repositories while facilitating data sharing through a central coordination layer.

How do you implement a master data management strategy?

First, define clear objectives and goals for your MDM initiative, outlining what you aim to achieve. Next, assess your existing data sources and quality, identifying areas for improvement. Then, select the appropriate MDM technology and tools that align with your organization’s needs.

Lastly, establish data governance policies and procedures to ensure data quality, consistency, and compliance while continuously monitoring and refining your MDM strategy to adapt to evolving data requirements and business goals.

What are the five core functions of master data management?

Data Collection: Gathering data from various sources across the organization, ensuring accuracy and completeness.

Data Transformation: Standardizing and harmonizing data to ensure consistency and uniformity.

Data Integration: Combining and linking data from disparate sources to create a single, reliable master data repository.

Data Quality Management: Implementing processes to maintain data accuracy, completeness, and reliability over time.

Data Governance: Establishing policies, procedures, and ownership roles to ensure ongoing data management, compliance, and security.

What are the five components of master data management?

Five components collectively contribute to effective master data management: data governance ensures data quality and compliance; data modeling establishes consistent structures; data quality focuses on measurement and improvement; data integration handles diverse data sources; and data security safeguards against unauthorized access and breaches.

What is the difference between ETL and MDM?

ETL (Extract, Transform, Load) focuses on data integration and preparation, while MDM (Master Data Management) centers on maintaining the quality and consistency of core data entities.

ETL ensures data is ready for analysis, while MDM governs and manages master data for accuracy and reliability. They work together, with ETL feeding clean data into MDM systems for effective data governance.