Key takeaways

- Building a global postal database in-house is not a one-time project. It becomes a permanent financial and governance obligation.

- Sourcing is harder than it looks. Official sources sometimes conflict, some markets restrict data access entirely, and in high-volume markets zip codes change faster than most teams can track.

- Stitching multiple sources together creates structural fragmentation due to varying data structures.

- In-house maintenance requires a minimum of 4 FTEs, which is $240,000–$360,000 per year, before setup costs.

- Gartner estimates poor data quality costs large enterprises $15 million per year. In-house builds are a direct path to that exposure.

- Licensing a standardized global dataset converts that risk into a predictable, fixed-cost infrastructure input.

Introduction

Should we license our ZIP code data from a provider, or build and maintain it in-house? This is a question that often comes up when deciding the future of a company’s location data.

The instinct to build is understandable. An in-house database offers control and the flexibility to customize data structures. The economics look contained. The scope looks manageable.

Yet, what begins as a simple sourcing project will become a permanent commitment. Based on US average salaries, staffing that runs a minimum of $240,000 to $360,000 per year. That’s before setup, quality assurance, or data standardization.

Gartner estimates that poor data quality costs large enterprises $15 million per year. Building an in-house database will carry unexpected hidden costs.

This article examines why building an in-house location database poses financial and operational risks and the impact it entails.

The sourcing reality: Why “just download the data” doesn’t work

ZIP code data looks straightforward: codes, cities, administrative divisions, and coordinates. Most countries publish data through a national postal operator. Some of it is free. The initial sourcing step looks like a few hours of work.

That impression is where most internal build projects first run into trouble.

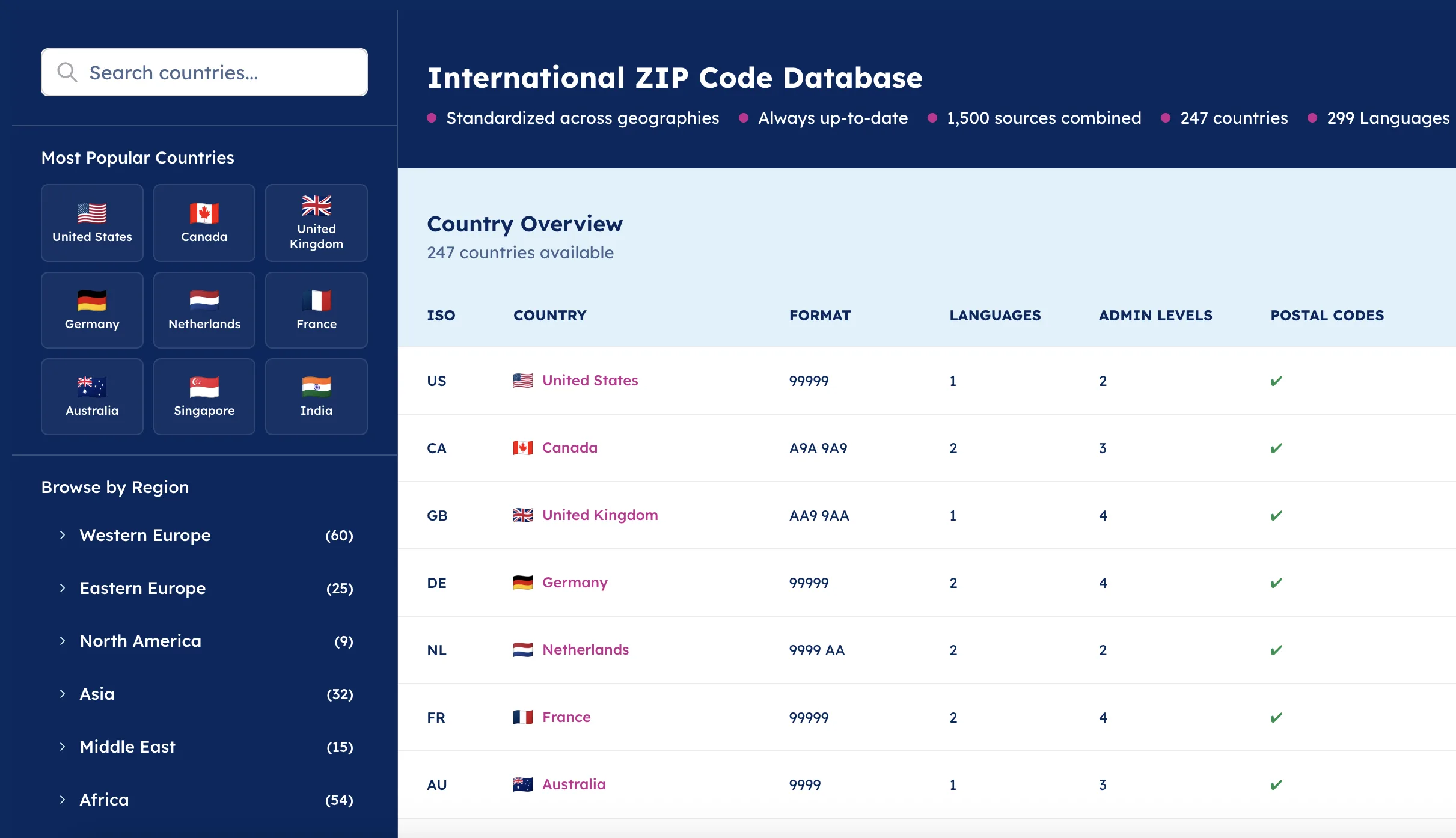

| Dimension | Internal build | Licensed dataset |

|---|---|---|

| Data sourcing | Multiple providers, inconsistent formats, and variable quality — including unreliable open-source datasets | Curated sources from authoritative providers, generally normalized into one structure |

| Hard-to-source geographies | China, India, Russia, and similar markets require specialist local knowledge most teams don’t have | Global coverage, some providers include hard-to-source geographies, maintained by dedicated specialists or integrated form reliable third-parties |

| Schema consistency | Each new country or source introduces structural variation — hierarchies, languages, scripts, and encoding all differ | Global address formats normalized into a unified schema, consistent administrative levels across countries |

| Update cadence | Dependent on internal capacity — often delayed, leading to silent data quality degradation downstream | Committed update cycles, well documented and supported with quick patches if needed. |

| Governance burden | Requires dedicated roles: data engineers, Geographic Information Systems (GIS) experts, country specialists, legal review for source licensing | Governance centralized in one vendor relationship, single point of accountability for quality and provenance |

| Long-term cost | Maintenance, governance, and expansion costs compound over time. The majority of total cost of ownership will be the ongoing maintenance. | Predictable, fixed-cost infrastructure input, no hidden escalation as the business scales to new geographies |

| Compliance exposure | Licensing restrictions on open-source data and outdated entries in regulated markets like Brazil create direct exposure | Commercially licensed, always up-to-date data aligned with national postal regulations across all markets |

The open-source trap

Open-sources like GeoNames or OpenStreetMap (OSM) are the first stop for teams evaluating an internal build. They’re accessible, broadly referenced, and cost nothing to download. But free data carries real tradeoffs. Gaps in coverage, inconsistent updates, variation across countries, and no accountability.

Monster, part of Randstad Group, experienced this directly. Before licensing their location data, their team relied on GeoNames for address matching. The data was available; it just wasn’t accurate or consistent enough to depend on at scale.

“My team was tasked with putting together a new in-house location database. During our first try we used Geonames. As we used it more, we discovered it wasn’t heavily curated. It had very patchy coverage of postcodes with varying accuracy across geographies. For us it was very important to have postcodes to make location data accurate, so we decided to delegate into an expert provider.” – Nick Beaugié, Senior Software Engineer.

Hard-to-source geographies compound the problem

High-quality postal data isn’t accessible across all markets. Hard-to-source geographies, such as restricted markets, need specialized sourcing strategies.

In China, for example, there is no official open-access source for postal code data. The Chinese government restricts the export of location data. Postal codes don’t always align with administrative changes. Address transliteration adds further complexity — the same Chinese characters can have multiple valid phonetic representations.

In Russia, the official source is the Federal Information Address System, managed by the Russian Tax Ministry. It contains no geocoordinates. The data is in Cyrillic only. Converting it to Latin script requires a custom transliteration process. Each Russian federal subject carries its own administrative division structure. Open data quality in Russia is generally poor.

Other examples include Germany, where official address data exists but isn’t published openly.

Across much of Africa and Southeast Asia, there is no synchronized authoritative source at all. Data is fragmented, inconsistently published, or simply absent.

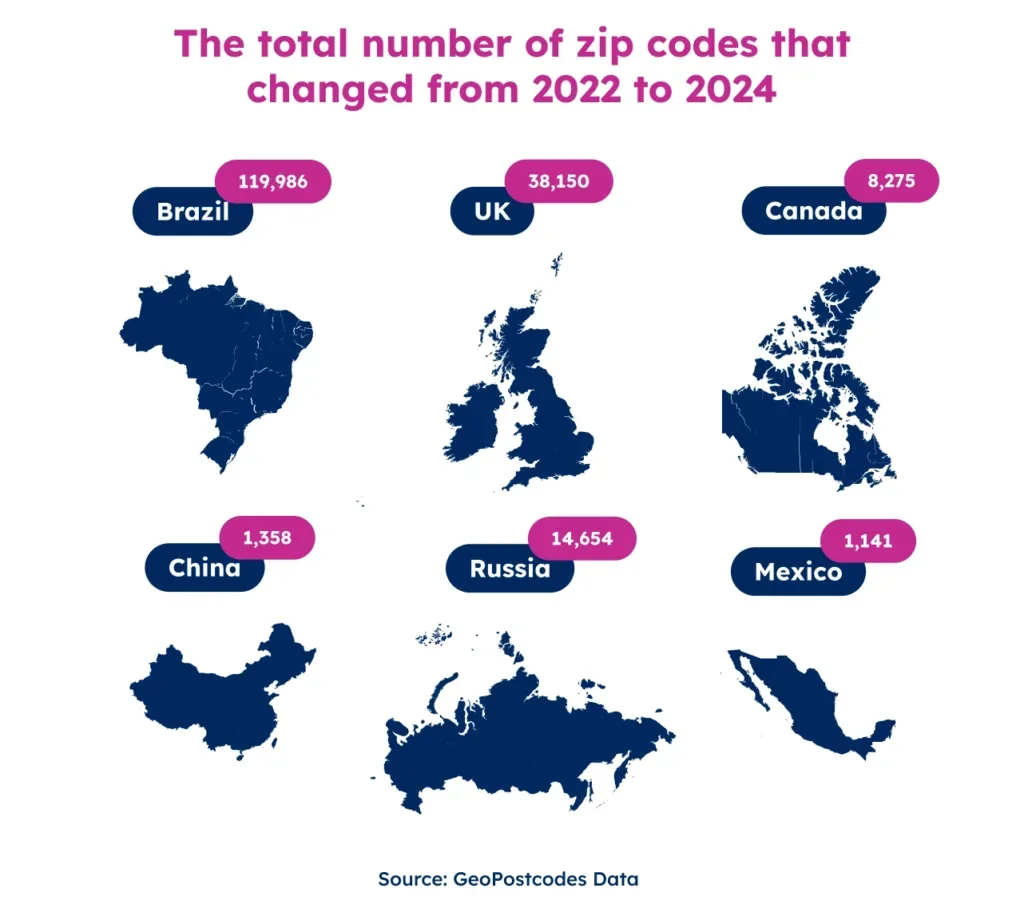

High-volume markets: the data changes faster than most teams can track

Some markets, such as the UK, Canada, Brazil, and the Netherlands, sit at the other end of the spectrum. The UK alone has 1.8 million postcodes. Each one covers around 15 addresses on average. The system sees more than 10,000 changes per quarter. That’s roughly 120 additions, deletions, or boundary modifications every day.

Canada operates at a very fine level of granularity, with hundreds of thousands of postal codes, often assigned to a single building, block, or high-volume recipient, and updated on a roughly six-week cycle by Canada Post.

Several other markets follow the same pattern: highly granular systems with large volumes of postal codes and high rates of change.

For an internal team, high-volume markets create a different kind of burden. The data isn’t always hard to find. It’s hard to keep current. Missing a quarterly update cycle means data degradation across every system that depends on it.

Multi-source stitching creates structural fragmentation

Data teams end up combining several sources to fill coverage gaps, such as national postal operators, open-source repositories, national statistical institutes, or local third-party providers.

Each source applies its own structure. Column names differ. Administrative hierarchies don’t align. What one country calls a “district” functions like a “municipality” in another and a “borough” in a third.

Mexico illustrates this problem clearly. The country has two official sources: Correos de México, the postal operator, and INEGI, the national statistical office. Both are authoritative, but their postal code assignments differ. Companies working in Mexico regularly report difficulty reconciling postal codes between datasets.

These issues cascade into pricing engines, address validation workflows, and BI reporting layers.

The “free” label doesn’t mean free to use commercially

There’s also a licensing dimension teams tend to overlook. Some sources that appear open carry meaningful restrictions that only become visible under legal scrutiny.

GeoNames, one of the most widely used free repositories, is published under a Creative Commons Attribution license, meaning any commercial product built on it must credit the source, which isn’t always compatible with how enterprise systems are structured.

OpenStreetMap operates under the Open Database License, which includes a share-alike clause: any database that incorporates OSM data must itself be released under the same open license.

For a company building a proprietary internal reference dataset or embedding location data in a commercial product, that clause can effectively prohibit the use entirely. These aren’t obscure edge cases; they’re the terms attached to the two most commonly reached-for free sources in the market.

Integration becomes a governance liability

Once a team has sourced and reconciled data for an initial set of countries, a new problem emerges. The data model built for a limited scope doesn’t scale globally. Reliable global ZIP code data is difficult to build and even harder to sustain at scale.

Every new country introduces new administrative structures, encoding requirements, and new scripts. Cyrillic, Arabic, and Chinese characters don’t map onto schemas designed for Latin-alphabet data. A structure that worked for Europe or the US shows strain the moment Russia or India enters the pipeline.

Every schema change requires coordination with the downstream teams that consume the data. Each update cycle creates a change management event. Each new country multiplies the number of stakeholders who must align on the revised structure.

What started as a project becomes a product. It needs dedicated ownership, vendor coordination, and governance documentation. None of that was in the original business case.

When governance slips, financial risk follows

Three categories of financial exposure emerge when that governance burden isn’t resourced adequately.

- Operational inefficiency from non-standardized data. When different business units consume conflicting location data, reporting outputs don’t align. Reconciling those discrepancies consumes analyst time and introduces uncertainty into planning and forecasting. Brizo by Datassential experienced the value of resolving this problem. Their team reduced market analysis time by 25% by licensing a standardized ZIP code and population dataset.

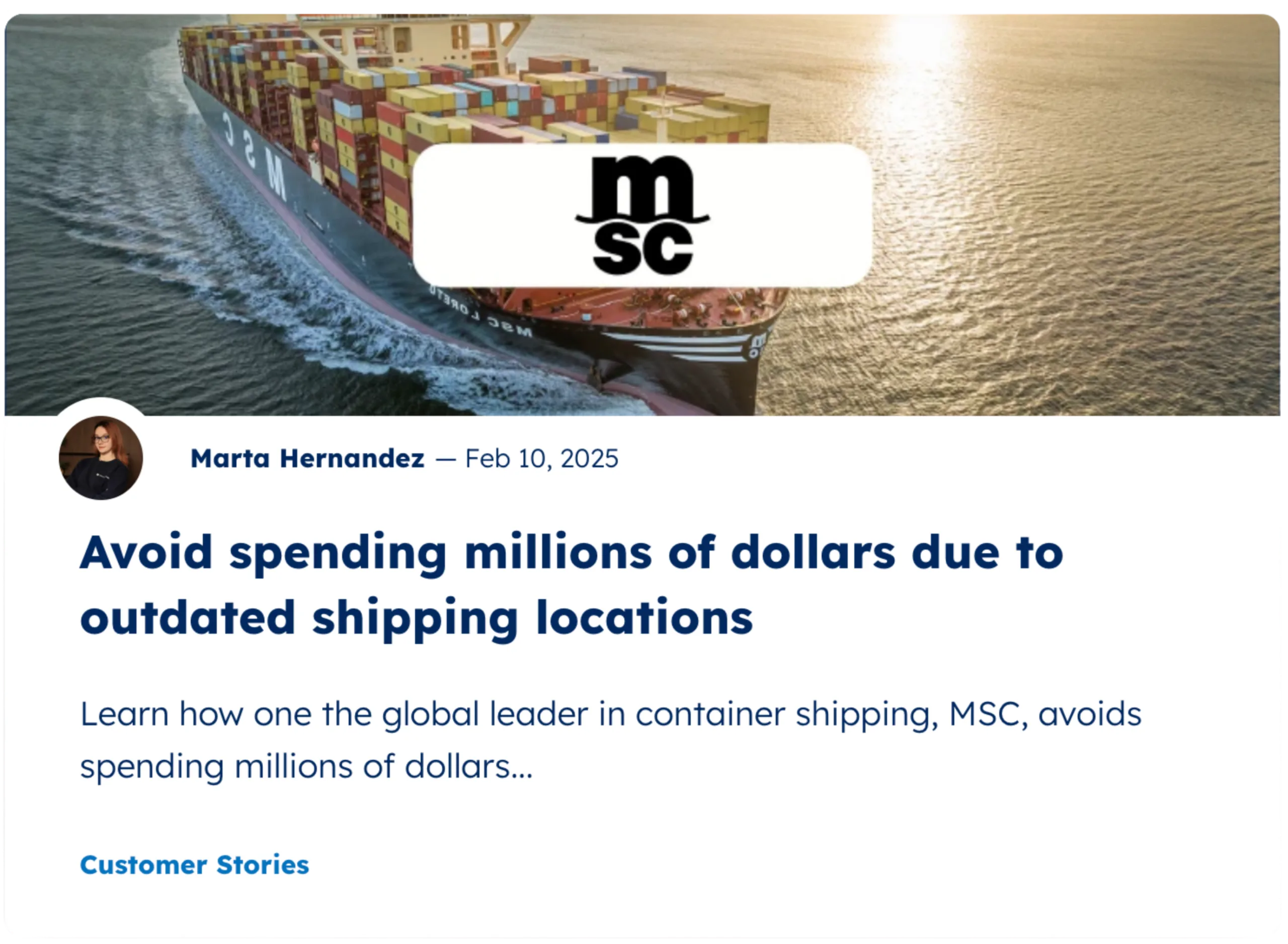

- Compliance exposure in regulated markets. Customs and trade compliance need precise postal code alignment with national regulations. Inaccurate or outdated location data will trigger direct financial and operational consequences. MSC highlights that even a single incorrect location entry requires corrections that can incur multimillion-dollar costs in high-volume ports like Shanghai.

- Governance opacity at scale. Managing many sources is difficult to document and audit. Data lineage grows opaque. Update provenance becomes unclear. Being unable to prove data quality when compliance, legal, or audit questions arise is a risk. MSC addressed this challenge by moving away from fragmented data management, eliminating repetitive processing, and saving approximately €500k annually in operational costs.

The compounding cost of ownership

The economics of an in-house database look very different at year one versus year three. Initial build costs are visible and bounded. Maintenance costs are ongoing and tend to grow.

The roles involved and the ongoing commitment they represent

A realistic cost model for in-house maintenance involves many different roles.

- Geographic Information System (GIS) Experts — to identify sources, provide geographic expertise, and country-specific judgments

- Data Engineers — to source, transform, and load data across many providers

- Data Governance Leads — to manage update cycles, schema consistency, and change documentation

- Country or Regional Specialists — to validate local formats and licensing constraints

- Legal and Compliance — to audit source licensing terms and commercial use rights

- Vendor Management — to negotiate, renew, and watch contracts with many local providers

None of these roles is a one-off, and the cost of hiring people responsible for that work adds up fast. At a minimum, maintaining a global postal database in-house requires 4 or more FTE’s with Data Engineering and GIS expertise.

That’s a floor of $240,000 to $360,000 per year, and it doesn’t include the cost of setting up the database, establishing sources, running quality assurance, or standardizing data formats.

Add those, and the true cost is closer to 2.5x that figure. Gartner estimates that poor data quality costs large enterprises $15 million per year. The maintenance overhead is only part of that exposure.

To put it into perspective, last year our team at GeoPostcodes processed over 13.4 million record changes. Those changes came from over 1,500 authoritative sources, meaning an in-house team would need to monitor around 82 sources every month just to stay current.

Mediterranean Shipping Company (MSC) understood this cost structure. Their data governance team manually reviewed and updated location data using open sources. One wrong entry could trigger booking failures, customs delays, or financial penalties. The risk was constant.

Replacing that process with a single standardized dataset saved MSC approximately €500,000 per year. It also freed more than 900 hours of team time per year.

“Any UNLOCODE or ZIP code modification can lead to system blockages, disrupting operations. Resolving such issues required a process that can incur multimillion-dollar costs, particularly in high-volume hubs like Shanghai. Thanks to GeoPostcodes, all ZIP codes in the region are now fully aligned with global UNLOCODEs, ensuring seamless transportation and compliance.” – Kavian Ranjbar, Data Governance Specialist at MSC.

What an enterprise-ready location data model looks like

An enterprise-grade approach to location data has four defining characteristics. An internally maintained build rarely achieves all four at scale.

Centralized accountability. A single licensed dataset means a standardized schema, ensuring data quality and efficiency. When data conflicts arise, there’s no ambiguity. You know which source to trust and who to contact.

Standardized structure across geographies. Address formats, administrative hierarchies, and coordinate systems map to a unified structure. When a new country gets added, the structure of the data doesn’t change.

Regular updates to reflect the world’s changing geography. A licensed database has a documented update cadence. Data arrives ready to consume, on a predictable schedule, with documented change logs. The burden of monitoring source freshness moves from your team to your vendor.

Coverage for hard-to-source geographies. New markets can be added without rebuilding sourcing workflows. That advantage grows as the organization scales.

The underlying principle is simple. Location data should be something enterprises set, embed, and forget. Integrated into core systems once. Maintained by specialists. Reliable without ongoing internal investment. That frees data teams to focus on work that generates competitive advantage.

Conclusion: The decision frame

Building and maintaining a global postal database creates a permanent financial and governance obligation. Risks show up as operational failures, reporting inconsistencies, and compliance exposure. Licensing your location data converts that exposure into a predictable, fixed-cost infrastructure input and centralizes ownership of the data.

GeoPostcodes provides the most comprehensive global location database. We empower Fortune 500 companies such as Amazon and DB Schenker to run address validation, distance calculation, and map visualization at a global scale.

One vendor. Every country. Trusted location data, worldwide. Explore our global postal database or sign up to evaluate our full data offer.